The official collection for our paper *LoraHub: Efficient Cross-Task Generalization via Dynamic LoRA Composition*, by Chengsong Huang\*, Qian Liu\*, Bill Yuchen Lin\*, Tianyu Pang, Chao Du and Min Lin (\*equal contribution).

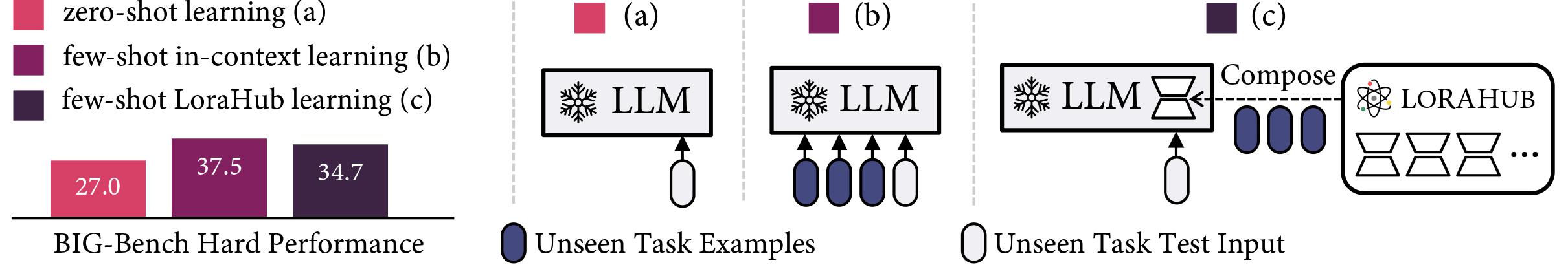

LoraHub is a framework for composing multiple LoRA modules trained on different tasks. Its goal is to achieve good performance on unseen tasks from only a few examples, without additional model parameters or gradient-based training. We also aim to build a marketplace where users can share their trained LoRA modules, making it easier to apply these modules to new tasks.
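The composition idea can be illustrated with a small, dependency-free Python sketch. It assumes, based on our reading of the paper, that each LoRA module is a pair of factors (A, B) with ΔW = BA, and that modules are merged by combining all A factors and all B factors linearly with the same weights w_i. The few-shot weight search uses plain random search as a stand-in for a gradient-free optimizer; it is not necessarily the optimizer the `lorahub` package uses. All function names (`compose`, `delta_w`, `random_search`), the weight range, and the toy data are hypothetical and are not part of the `lorahub` API.

```python
import random
from typing import Callable, List, Sequence, Tuple

Matrix = List[List[float]]
# Hypothetical representation: (A with shape r x d_in, B with shape d_out x r).
LoraModule = Tuple[Matrix, Matrix]


def weighted_sum(mats: Sequence[Matrix], weights: Sequence[float]) -> Matrix:
    """Element-wise sum_i w_i * M_i for equally shaped matrices."""
    rows, cols = len(mats[0]), len(mats[0][0])
    return [
        [sum(w * m[i][j] for m, w in zip(mats, weights)) for j in range(cols)]
        for i in range(rows)
    ]


def matmul(x: Matrix, y: Matrix) -> Matrix:
    """Plain matrix product x @ y."""
    return [
        [sum(x[i][k] * y[k][j] for k in range(len(y))) for j in range(len(y[0]))]
        for i in range(len(x))
    ]


def compose(modules: Sequence[LoraModule], weights: Sequence[float]) -> LoraModule:
    """Merge modules by combining the A factors and the B factors separately."""
    a_hat = weighted_sum([a for a, _ in modules], weights)
    b_hat = weighted_sum([b for _, b in modules], weights)
    return a_hat, b_hat


def delta_w(module: LoraModule) -> Matrix:
    """Low-rank weight update Delta W = B @ A (shape d_out x d_in)."""
    a, b = module
    return matmul(b, a)


def random_search(
    loss_fn: Callable[[List[float]], float],
    n_modules: int,
    steps: int = 200,
    seed: int = 0,
) -> Tuple[List[float], float]:
    """Gradient-free search: sample weight vectors, keep the lowest-loss one."""
    rng = random.Random(seed)
    best_w = [1.0 / n_modules] * n_modules  # start from a uniform blend
    best_loss = loss_fn(best_w)
    for _ in range(steps):
        # The sampling range [-1.5, 1.5] is an arbitrary illustrative choice.
        cand = [rng.uniform(-1.5, 1.5) for _ in range(n_modules)]
        loss = loss_fn(cand)
        if loss < best_loss:
            best_w, best_loss = cand, loss
    return best_w, best_loss


if __name__ == "__main__":
    # Two hypothetical rank-1 modules for a 2x2 layer (d_in = d_out = 2, r = 1).
    mod1 = ([[1.0, 0.0]], [[1.0], [0.0]])  # Delta W = [[1, 0], [0, 0]]
    mod2 = ([[0.0, 1.0]], [[0.0], [1.0]])  # Delta W = [[0, 0], [0, 1]]
    modules = [mod1, mod2]
    # Hypothetical few-shot examples (x, y) for an unseen task.
    examples = [([1.0, 0.0], [0.5, 0.0]), ([0.0, 1.0], [0.0, 0.5])]

    def few_shot_loss(weights: List[float]) -> float:
        """Mean squared error of the composed update on the few-shot examples."""
        dw = delta_w(compose(modules, weights))
        err = 0.0
        for x, y in examples:
            pred = [sum(dw[i][j] * x[j] for j in range(2)) for i in range(2)]
            err += sum((p - t) ** 2 for p, t in zip(pred, y))
        return err / len(examples)

    w, loss = random_search(few_shot_loss, n_modules=2)
    print("weights:", w, "loss:", loss)
```

Because A and B are merged separately, the composed update (Σ w_i B_i)(Σ w_i A_i) contains cross terms B_i A_j and is not the same as Σ w_i B_i A_i; in the toy example, this is why no choice of weights reproduces 0.5·I exactly. In exchange, the merged module keeps the original rank r, so it adds no parameters beyond those of a single LoRA module.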

- Code: https://github.com/sail-sg/lorahub

- Install: `pip install lorahub`